- cross-posted to:

- [email protected]

Sure you do. It’s not at all a transparent attempt to prolong the bubble.

I only have a rather high level understanding of current AI models, but I don’t see any way for the current generation of LLMs to actually be intelligent or conscious.

They’re entirely stateless, once-through models: any activity in the model that could be remotely considered “thought” is completely lost the moment the model outputs a token. Then it starts over fresh for the next token with nothing but the previous inputs and outputs (the context window) to work with.
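To make the "once-through" point concrete, here is a toy sketch of token-by-token generation. `next_token` is a hypothetical stand-in for one forward pass, and the vocabulary and random "activations" are made up for illustration; this is not how any real inference stack is implemented. The only thing it tries to show is that whatever is computed inside one step is gone by the next, and the token list is all that carries over.

```python
import random

VOCAB = ["the", "cat", "sat", "down"]  # made-up vocabulary for the toy

def next_token(context: list[str]) -> str:
    """Hypothetical stand-in for one forward pass of a model.

    `hidden` plays the role of the internal activations. It is a local
    variable, so it is discarded as soon as the function returns; only
    the chosen token survives.
    """
    rng = random.Random(len(context))  # deterministic toy "model"
    hidden = [rng.random() for _ in VOCAB]
    best = max(range(len(VOCAB)), key=lambda i: hidden[i])
    return VOCAB[best]

def generate(prompt: list[str], n_tokens: int) -> list[str]:
    """Generate n_tokens, one at a time, from nothing but the token list."""
    context = list(prompt)
    for _ in range(n_tokens):
        token = next_token(context)  # sees only the tokens so far
        context.append(token)        # the context window is the only memory
    return context
```

Calling `generate(["once"], 3)` returns the prompt plus three tokens; nothing from inside `next_token` is available to later steps except through the tokens it emitted.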

That’s why it’s so stupid to ask an LLM “what were you thinking”, because even it doesn’t know! All it’s going to do is look at what it spat out last and hallucinate a reasonable-sounding answer.

I agree, but not because of lost state. As others have mentioned, state can be managed. You could also just add a feedback loop. These improve things, but don’t solve the problem. The issue is that it doesn’t understand. You mention that it is just a word predictor, and that is the heart of it: it predicts based on odds, more or less, not on understanding. That said, it has room to improve. I think having lots and lots of highly specialized agents is probably the key. The narrower the focus, the closer prediction comes to fact. Then throw in asking 5 versions of the agent the same question and tossing the outliers, and you should get something pretty useful. Not AGI, but useful. The issue is that with current technology, that is simply too expensive. So a breakthrough in the cost of running current AI is needed first, then we can get more useful AI. AGI will be a significantly different technology.
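A minimal sketch of the "ask 5 versions and toss the outliers" idea, assuming the agents return short answers that can be compared as strings. The `ask` callable and the canned answers are hypothetical placeholders for real agent calls; a plain majority vote is used here as one simple way to discard outliers, not as the only one.

```python
from collections import Counter
from typing import Callable, Optional

def majority_answer(ask: Callable[[str], str], question: str, n: int = 5) -> Optional[str]:
    """Ask the same question n times and keep the answer most runs agree on.

    Returns None when no answer wins a strict majority, which is a signal
    that the runs disagree too much to trust any of them.
    """
    answers = [ask(question) for _ in range(n)]
    answer, count = Counter(answers).most_common(1)[0]
    return answer if count > n // 2 else None

# Hypothetical stand-in for five agent runs: three agree, two are outliers.
canned = iter(["42", "42", "41", "42", "7"])
print(majority_answer(lambda q: next(canned), "What is 6 * 7?"))  # prints 42
```

Note that this multiplies the cost by `n`, which is the expense problem mentioned above.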

The conversion of the output to tokens inherently loses a lot of the information extracted by the model and any intermediate state it has synthesized (what it “thinks” of the input).

Until the model is able to retain its own internal state and able to integrate new information into that state as it receives it, all it will ever be able to do is try to fill in the blanks.

Not sure what this internal state you are referring to is. Are you talking about all the values that come out of each step of the computations?

As for your second half… integration. That is a tricky one, because the inputs it is getting aren’t necessarily correct, so integrating them can do more harm than good. The current loop for integrating new data is too long, though. They need to reduce that down to something like an hour so it can at least absorb current events. And ideally they would be able to take a conversation, identify what worked and what didn’t, and then integrate what did. This is what was mentioned about CLAUDE.md files and such, which essentially keep track of what was learned. There is room for improvement there, as I seem to have to tell the model to go read those or it doesn’t.

Not sure what this internal state you are referring to is. Are you talking about all the values that come out of each step of the computations?

It would need to be able to form memories like real brains do, by creating new connections between neurons and adjusting their weights in real time in response to stimuli, and having those connections persist. I think that’s a prerequisite to models that are capable of higher-level reasoning and understanding. But then you would need to store those changes to the model for each user, which would be tens or hundreds of gigabytes.

These current once-through LLMs don’t have time to properly digest what they’re looking at, because they essentially forget everything once they output a token. I don’t think you can make up for that by spitting some tokens out to a file and reading them back in, because it still has to be human-readable and coherent. That transformation is inherently lossy.

This is basically what I’m talking about:

But for every single token the LLM outputs. The fact that it’s allowed to take notes is a mitigation for this context loss, not a silver bullet.

Yeah, I get what you are saying. I’m just not convinced that it needs to be able to update its model in real time to be capable of high-level reasoning. And while human-readable files are inherently lossy, they do still represent tracking an internal state.

They also have vector DBs. My understanding is that they are closer to what you are talking about as far as internal state goes. But they still don’t allow the AI to update the vector DB in real time. Mainly they worry that live updates would make it as easy to manipulate as people who get talked into believing BS, so they are more careful about what they feed it. I do wonder how they generate those vector DBs, and whether that is something users could utilize locally.
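On the question of what a vector DB does under the hood, here is a toy sketch that runs locally with nothing but the standard library. The two-dimensional vectors are written by hand purely for illustration; in a real setup they would come from an embedding model, and `ToyVectorStore` is a made-up class, not the API of any actual vector database.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

class ToyVectorStore:
    """Stores (vector, text) pairs and returns the text closest to a query."""

    def __init__(self) -> None:
        self.items: list[tuple[list[float], str]] = []

    def add(self, vector: list[float], text: str) -> None:
        self.items.append((vector, text))

    def nearest(self, query: list[float]) -> str:
        # Brute-force scan; real stores use approximate indexes for speed.
        return max(self.items, key=lambda item: cosine(query, item[0]))[1]

store = ToyVectorStore()
store.add([1.0, 0.0], "note about cats")   # hand-made "embedding"
store.add([0.0, 1.0], "note about GPUs")   # hand-made "embedding"
print(store.nearest([0.9, 0.1]))  # prints: note about cats
```

Adding an entry is just an append, so nothing technical stops live updates; the caution described above is about what gets written, not whether it can be.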

There’s no reason an LLM couldn’t be hooked up to a database, where it can save outputs and then retrieve them again to “think” further about them. In fact, any LLM that can answer questions about previous prompts/responses has to be able to do this. If you prompted an LLM to review all of its database entries, generate a new response based on that data, then save that output to the database and repeat at regular intervals, I could see calling that a kind of thinking. If you do the same process but with the whole model and all the DB entries, that’s in the region of what I’d call a strange loop. Is that AGI? I don’t think so, but I also don’t know how I would define AGI, or if I’d recognize it if someone built it.
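The review, respond, save, repeat loop described here could be sketched roughly like this, using SQLite from the standard library. The `llm` callable, the `notes` table, and the prompt wording are all assumptions made up for the example; `fake_llm` only counts the notes it was shown so the loop can run without a real model.

```python
import sqlite3
from typing import Callable

def think_loop(llm: Callable[[str], str], db: sqlite3.Connection, rounds: int) -> None:
    """Each round: read every saved note, ask for a new one, save it back."""
    db.execute("CREATE TABLE IF NOT EXISTS notes (id INTEGER PRIMARY KEY, body TEXT)")
    for _ in range(rounds):
        rows = db.execute("SELECT body FROM notes ORDER BY id").fetchall()
        prompt = "Review these notes and add a new thought:\n" + "\n".join(r[0] for r in rows)
        db.execute("INSERT INTO notes (body) VALUES (?)", (llm(prompt),))
    db.commit()

def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call: reports how many notes it saw."""
    seen = len(prompt.splitlines()) - 1  # first line is the instruction
    return f"thought built on {seen} earlier notes"

db = sqlite3.connect(":memory:")
think_loop(fake_llm, db, rounds=3)
for (body,) in db.execute("SELECT body FROM notes ORDER BY id"):
    print(body)
```

Every round pastes the whole table into the prompt, so the prompt grows without bound. In a real system that runs straight into the context-window limit.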

If you prompted an LLM to review all of its database entries, generate a new response based on that data, then save that output to the database and repeat at regular intervals, I could see calling that a kind of thinking.

That’s kind of what the current agentic AI products like Claude Code do. The problem is context rot. When the context window fills up, the model loses the ability to distinguish between what information is important and what’s not, and it inevitably starts to hallucinate.

The current fixes are to prune irrelevant information from the context window, use sub-agents with their own context windows, or just occasionally start over from scratch. They’ve also developed conventional `AGENTS.md` and `CLAUDE.md` files where you can store long-term context and basically “advice” for the model, which is automatically read into the context window.

However, I think an AGI inherently would need to be able to store that state internally, to have memory circuits, and “consciousness” circuits that are connected in a loop so it can work on its own internally encoded context. And ideally it would be able to modify its own weights and connections to “learn” in real time.

The problem is that would not scale to current usage because you’d need to store all that internal state, including potentially a unique copy of the model, for every user. And the companies wouldn’t want that because they’d be giving up control over the model’s outputs since they’d have no feasible way to supervise the learning process.

Yeah, I think for it to be a proper strange loop (if that is indeed a useful proxy for consciousness-- I think there’s room for debate on that) it would need to be able to take its entire “self”, i.e. the whole model, weights, and all memories, as input in order to iterate on itself. I agree that it probably wouldn’t work for the current commercial applications of LLMs, but the fact that it isn’t what commercial LLMs do doesn’t mean it couldn’t be done for research purposes.

You seem to know more than me so can I ask you a question? I have a general sense of what the context window is / means. But why is it so small when the model is trained on huge, huge amounts of data? Why can the model encompass a whole library of training data but only a very modest context window?

Training data isn’t stored in the model. The model processes that data and uses it to adjust its parameters, i.e. the weights (usually several billion to a hundred billion or more for commercial models), which are spread across many layers and attention heads. These weights and the architecture are what get fixed in the model during training.

Inference is what happens when the model generates text from an input, and at this point the weights are fixed, so it doesn’t actually retain all the information it was trained on. The context window refers to how many tokens (words or pieces of words) it can hold in its memory at a time.

For every token that it processes, it runs a series of calculations on embedded vectors and passes them through several layers in which they’re considered against the context of all the other tokens in the context window. This involves matrix multiplication and is very compute-heavy. Think like 1-4GB of RAM for every billion parameters, plus several more GB of RAM for the context window. There’s just no way it would be able to hold its entire training dataset in RAM at a time.
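The 1-4GB per billion parameters figure falls out of how many bytes each weight takes: 4 bytes at 32-bit floats, 2 at 16-bit, 1 at 8-bit. A quick back-of-the-envelope sketch, counting only the weights and using 1 GB = 10^9 bytes (the extra memory for the context window is not included, and the 70-billion-parameter model is just an example size):

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

def weight_memory_gb(n_params: float, dtype: str) -> float:
    """Memory for the weights alone, in GB (10^9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9

for dtype in BYTES_PER_PARAM:
    print(dtype, weight_memory_gb(1e9, dtype), "GB per billion parameters")

# Example: a hypothetical 70-billion-parameter model stored at 16-bit.
print(weight_memory_gb(70e9, "fp16"), "GB")  # prints 140.0 GB
```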

You would need to integrate retrieval-augmented generation to fetch the relevant data into the context window before generating a response, but that’s not at all the same as containing all that knowledge in a stateful manner.
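For anyone who hasn’t seen retrieval-augmented generation, the basic shape is: pick the few documents most relevant to the question, paste them into the prompt, then generate. A rough sketch, with one loud simplification: relevance here is scored by shared words, whereas real systems normally compare embedding vectors. The function names, documents, and prompt layout are made up for the example.

```python
def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the query.

    Word overlap is a stand-in for embedding similarity, used only to
    keep the example self-contained.
    """
    query_words = set(query.lower().split())
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & set(doc.lower().split())),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Put the retrieved text into the context ahead of the question."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"

docs = [
    "the context window holds tokens",
    "bananas are yellow",
    "weights are fixed after training",
]
print(build_prompt("how big is the context window", docs, k=1))
```

The model still only sees whatever fits in the prompt. The knowledge lives in the document store, not in the model, which is the distinction being drawn above.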

The size of the context window is fixed in the structure of the model. LLMs are still at their core artificial neural networks, so an analogy to biology might be helpful.

Think of the input layer of the model like the retinas in your eyes. Each token in the context window, after embedding (i.e. conversion to a series of numbers, because ofc it’s just all math under the hood), is fed to a certain set of input neurons, just like the rods and cones in your retina capture light and convert it to electrical signals, which are passed to neurons in your optic nerve, which connect to neurons in your visual cortex, each layer along the way processing and analyzing the signal.

The number of tokens in the context window is directly proportional to the number of neurons in the input layer of the model. To make the context window bigger, you have to add more neurons to the input layer, but that quickly results in diminishing returns without adding more neurons to the inner layers to be able to process the extra information. Ultimately, you have to make the whole model larger, which means more parameters, which means more data to store and more processing power per prompt.

Oh… so it’s kind of like taking something that’s few-to-many and making it many-to-many, and the number of connections is what costs you.

That’s what an LLM is, a database of words using vectors.

You’re still limited by the context window in your example. Giving it another source of information doesn’t do anything other than give it more context.

Right, I mean if you made the context window enormous, such that you can include the entire set of embeddings and a set of memories (or maybe an index of memories that can be “recalled” with keywords), you’ve got a self-observing loop that can learn and remember facts about itself. I’m not saying that’s AGI, but I find it somewhat unsettling that we don’t have an agreed-upon definition. If a for-profit corporation made an AI that could be considered a person with rights, I imagine they’d be reluctant to be convincing about it.

You still lose the internal state between each token in the database output. It would let it plan, but it would still be externalizing that planning, one token at a time. Condensing all of the internal state into a single token at a time still means huge losses in detail as well as fragmentation of responses, resulting in all the problems that you see with LLMs.

Somehow the actual internal state needs to not only be preserved, but fed back into itself. That’s how brains work. Condensing it into tokens isn’t enough.

LLMs aren’t AI, let alone AGI.

They’re fucking prediction engines with extra functions.

The best description I’ve ever heard of LLMs is “a blurry jpeg of the internet”. From the perspective of data compression and retrieval, they’re impressive… but they’re still a blurry jpeg. The image doesn’t change, you can only zoom in on different parts of it and apply extra filters, and there’s nothing you can truly do about the compression artifacts (what we call “hallucinations”). It can’t think, it can’t learn, it just is, and that’s all it will ever be.

So are we. Your definition of AI also seems off. It’s a field of computer science dealing with seemingly cognitive algorithms, basically everything that is not rule-based programming. I have worked in AI production for over ten years. It is absolutely valid and necessary to hate AI, but not to deny technical functionality. Also, regarding the other answer to your comment: of course training a neural network is a form of learning, whether it is by reinforcement or by training data. There were many applications of ML for many years before LLMs, so it makes no sense to deny that it exists.

What’s your psychology background?

I get that you’re trolling but I don’t understand where you’re coming from. Why psychology?

It’s an industrial-sized prediction engine. And when you apply that to bioscience, it predicts things that save lives.

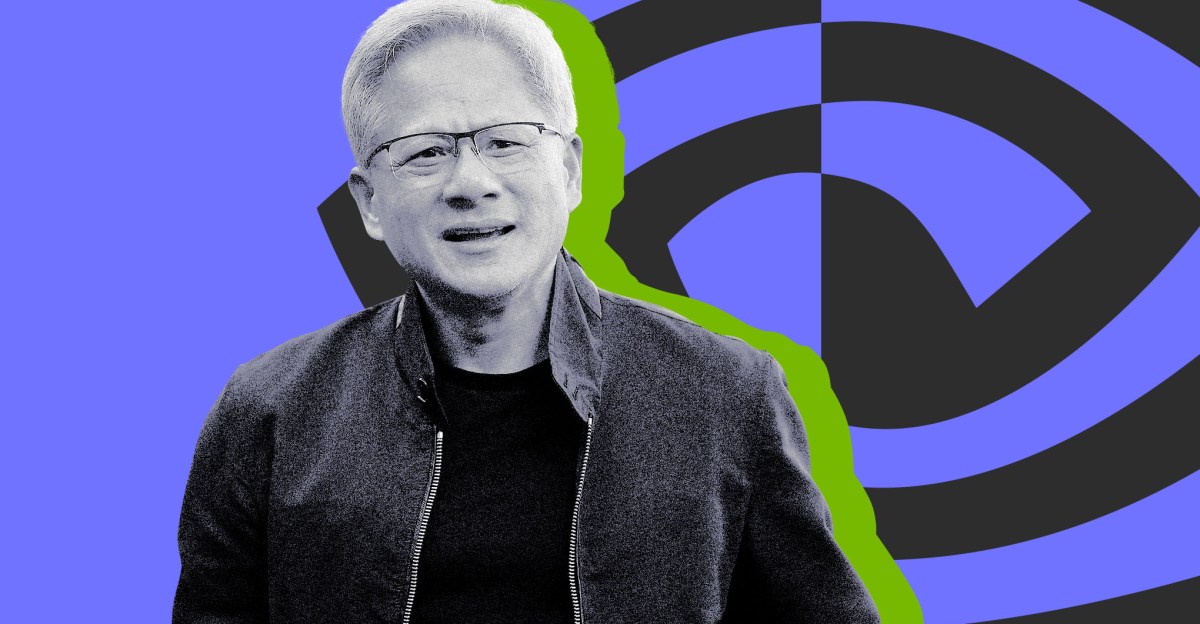

So why do we need Jensen Huang?

Exactly. CEO is maybe the easiest job for an AI to take over, so an AGI is possibly the most perfect candidate for that role.

Put up or shut up, tech bro CEOs. Replace yourself if it’s so fucking amazing.

AIs can’t play golf.

Just replacing one eco horror with another.

Why do we need any of them? They’ve completed the job. All future plans cancelled.

Fridman, the podcast’s host, defines AGI as an AI system that’s able to “essentially do your job,” as in start, grow, and run a successful tech company worth more than $1 billion. He then asks Huang when he believes AGI will be real — asking if it’s, say, five, 10, 15, or 20 years away — and Huang responds, “I think it’s now. I think we’ve achieved AGI.”

So we’ve achieved AGI in the sense that it could replace a nonsensical fart-sniffing clown, hyping a horde of morons into valuating a company at orders of magnitude its actual worth?

I think you’re a bullshitting con artist.

Grifter gonna grift

Geez. You can almost smell the desperation on this guy.

Well, he wears the same leather jacket 24/7 so he can’t smell good.

If I was a NVDA investor, I’d be worried. This clown is doing nothing but gaslighting and lying these days.

But you’re wrong, you’re all wrong!

Average Gaslighting Idiot.

AKA “a CEO.”

Oh yes we have achieved AGI! But what we really need is Artificial General Super Intelligence! Just another trillion and it will be useful bro!

No… you haven’t.

These fuckers will claim whatever nonsense to keep themselves relevant enough to take on more debt before they collapse.

They are going to create a success story where someone becomes a billionaire with an AI doing everything. Then idiots will chase that dream for a hundred years and fill these rich fucks’ bank accounts.

I agree, they start to sound desperate to keep their current momentum going. I think the bubble will burst soon. Things look solid until they’re not.

Looking at their history, they have always been able to create markets for their GPUs, and AI has obviously been incredible for them. There will be a next hot thing after AI, and they’ll try to have that, too. The alternatives to CUDA are not there yet; ROCm is still lacking and fiddly. I see a lot of things happening, but NVIDIA collapsing for whatever reason is not one of them.

This guy has completely lost the plot. I don’t think it’s possible to be even more disconnected from reality.

Literally the story above this in my feed is OpenAI shutting down expensive services 😂

You goofy goobers