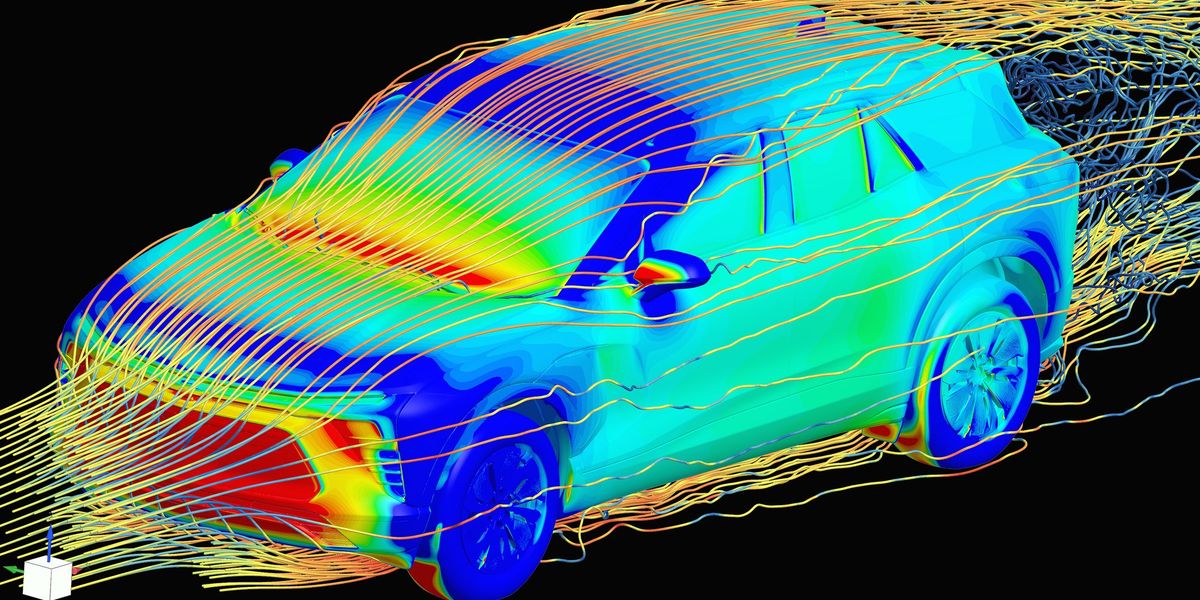

…Previously, a creative design engineer would develop a 3D model of a new car concept. This model would be sent to aerodynamics specialists, who would run physics simulations to determine the coefficient of drag of the proposed car—an important metric for energy efficiency of the vehicle. This simulation phase would take about two weeks, and the aerodynamics engineer would then report the drag coefficient back to the creative designer, possibly with suggested modifications.

Now, GM has trained an in-house large physics model on those simulation results. The AI takes in a 3D car model and outputs a coefficient of drag in a matter of minutes. “We have experts in the aerodynamics and the creative studio now who can sit together and iterate instantly to make decisions [about] our future products,” says Rene Strauss, director of virtual integration engineering at GM…

“What we’re seeing is that actually, these tools are empowering the engineers to be much more efficient,” Tschammer says. “Before, these engineers would spend a lot of time on low added value tasks, whereas now these manual tasks from the past can be automated using these AI models, and the engineers can focus on taking the design decisions at the end of the day. We still need engineers more than ever.”

because it’s not a “creative writing machine”, it’s machine learning that’s been trained to run physics simulations. it has nothing to do with LLMs. we’ve been using systems like this for decades, including ones like Folding@home, which have been instrumental in the development of many drug therapies for different illnesses.

your internal biases have clouded your critical thinking skills and your ability to competently and thoughtfully examine information you’re provided has been compromised. in plain english, like the AI techbros you despise, you’ve given up your ability to think.

It’s hardly their fault for thinking it was related to the AI LLM or multimodal models when in all actuality the article states that these “large physics models” may be any sort of configuration, including LLM transformers:

It seemed you really needed to take your frustrations out on someone else’s comment.

The unthinking AI haters are all over social media. They keep saying that AI can’t really think. But ironically, the “arguments” they usually use are the worst kind of unthinking, regurgitated groupthink slop.

Transformers can’t really think (at least not more than an Excel sheet). Oversimplified, it’s a stochastic model: it gives a probable result.

But that does not make it useless; there are many tasks where probable, with the right margin of error, is good enough.

But there are also tasks where it isn’t. Humans also have a chance of error, but they can be at fault for it and take responsibility.