This is due to phishing attacks and account takeover attempts, not because the platform itself is insecure.

I mean, it’s not that Signal has security issues per se, but the German government’s security people don’t have any control over what goes into its releases, either.

If you remember the aftermath of Signalgate, the US doesn’t allow American officials to use Signal for their communications, because the government doesn’t certify it for transmitting classified information and has its own app that officials are supposed to use instead.

On March 15, Secretary of Defense Pete Hegseth used the chat to share sensitive and classified details of the impending airstrikes, including types of aircraft and missiles, as well as launch and attack times.[1][2] The name of an active undercover CIA officer was mentioned by CIA director John Ratcliffe in the chat,[3] while Vance and Hegseth expressed contempt for European allies.[4][5]

A forensic investigation by the White House information technology office determined that Waltz had inadvertently saved Goldberg’s phone number under Hughes’ contact information. Waltz then added Goldberg to the chat while trying to add Hughes.[15] Subsequently, investigative journalists reported Waltz’s team regularly created group chats to coordinate official work[16] and that Hegseth shared details about missile strikes in Yemen to a second group chat which included his wife, his brother, and his lawyer.[17]

On March 18, 2025, the Pentagon sent a department-wide memo warning, “Please note: third party messaging apps (e.g. Signal) are permitted by policy for unclassified accountability/recall exercises but are NOT approved to process or store nonpublic unclassified information”—a category whose release would be far less potentially damaging than that about ongoing military operations.[27] A former NSA hacker said that linking Signal to a desktop app is one of its biggest risks, as Ratcliffe suggested he had done.[28]

According to the article, German government information security people do that for Wire:

Klöckner highlighted that Wire is already provided by the Bundestag administration and is certified by Germany’s Federal Office for Information Security (BSI).

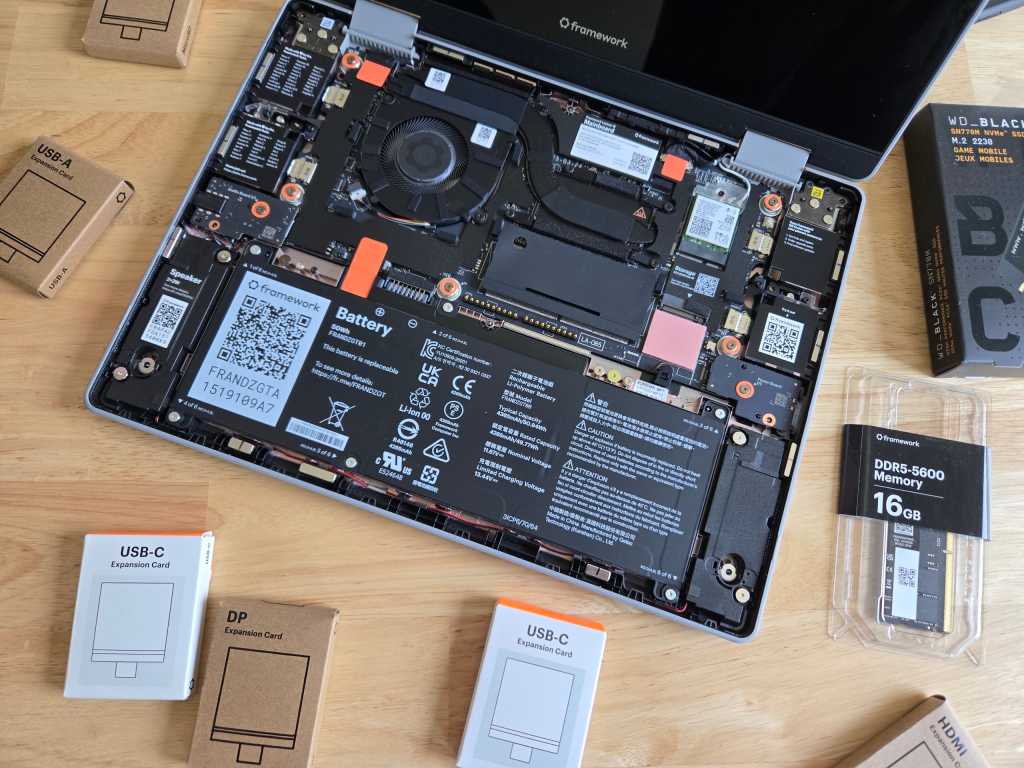

If your goal is to leave your smartphone at home and carry this instead, so that you don’t have the phone with you, okay, sure.

But if you’re not, you’re just carrying an additional device to do something that the first device is quite capable of handling.