The only reliable anarchists have vaginas.

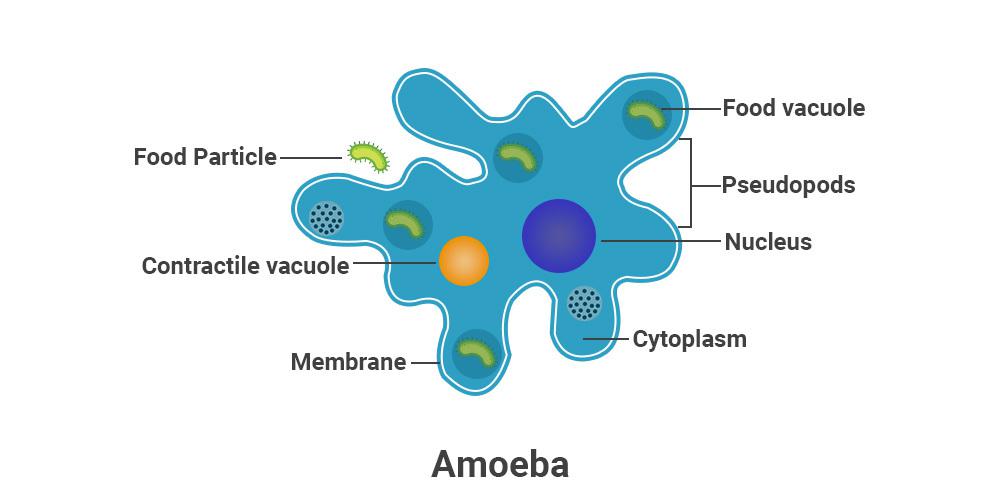

Avid Amoeba

- 59 Posts

- 1.61K Comments

Yeah I also use config-as-code along with wiki but I used to remember things 10 years ago when the setup was simpler and the brain was newer. 😅

I’m here to serve.

77·5 days ago

“I don’t think people realize that every single file Claude looks at gets saved and uploaded to Anthropic,” the researcher “Antlers” told us. “If it’s seen a file on your device, Anthropic has a copy.”

No shit.

3·5 days ago

No shit.

5·5 days ago

If only prices were related to costs … 😄

1·9 days ago

I use Home Assistant (running on Yellow) with ZigBee, Matter/Thread and Z-Wave. If I had to start today, I’d get a Pi 4/5 and Home Assistant ZBT-2. I would not run anything else on the Pi. Let HA OS take it over to ensure smooth updates. Then I’d add ZWA-2 if I need Z-Wave. Note you’d need 2x ZBT-2 if you want both ZigBee and Matter/Thread.

8·11 days ago

Also they can now generate content without users, which they already do a lot on Facebook.

6·11 days ago

Very interesting.

37·12 days ago

“The thought had occasionally crossed my mind: ‘Are we building tools to replace ourselves?’ But I’d convinced myself we were just automating the mundane to free everyone up for more strategic work.

“That narrative came crashing down when thousands of colleagues no longer had jobs.”

Our industry is full of naive motherfuckers like this. I see them daily at work.

Whenever you see someone or yourself repeating corporate talking points, remember that the core interests of the corporation contradict yours. The corpo only has you there because it can’t make as much money without you. It only pays you as much because it can’t get away with paying you less. There’s plenty of variance and nuance between cases, but all that’s overlaid on top of the base interests; it doesn’t obviate or replace them. The core interests are enforced as times get tougher or when there’s another opportunity that promises to further them against yours.

15·14 days ago

It’s funny how his calculation factors in the completely immaterial price-of-tokens variable instead of the material one, which is the number of tokens or, better yet, the productivity gain per token.

Does it come with nuclear weapons?

52·15 days ago

Wrong community. This is for c/selfhosting.

6·17 days ago

I also enjoy discussing attack vectors with my wife.

17·19 days ago

Especially now that helium supply has been cut by 30% by US-Israel.

5·19 days ago

This bud is going to get a visit from the MIC.

Missing from the meme is DJT asking Dada Xi for help.

So for peasants running Chairman Xi’s LLMs on local GPUs, we could try the largest model we can run and have it generate scripts to run instead of having the model do the actual processing of bulk data, to get more out of it.
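A rough sketch of that idea in Python, assuming nothing about any particular model or runtime: `ask_model_for_script` is a hypothetical stand-in for one call to a local LLM (here it just returns a canned script so the example runs without a GPU), and the CSV columns are made up. The point is only that the model is called once to write the transform, and plain Python then chews through the bulk rows.

```python
import csv
import io


def ask_model_for_script(task: str) -> str:
    """Hypothetical stand-in for ONE call to a local LLM.

    A real version would send `task` to whatever model you run locally.
    Here it returns a canned script so the sketch is runnable as-is.
    """
    return (
        "def transform(row):\n"
        "    # Tidy the name and turn the amount into a float.\n"
        "    return {'name': row['name'].strip().title(),\n"
        "            'amount': float(row['amount'])}\n"
    )


def load_transform(source: str):
    """Turn the generated source into a callable.

    exec() runs arbitrary code, so read the generated script before
    doing this with real model output.
    """
    namespace = {}
    exec(source, namespace)
    return namespace["transform"]


def process_bulk(csv_text: str, transform):
    """Apply the generated function to every row, with no model calls."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [transform(row) for row in reader]


script = ask_model_for_script("clean up the name and amount columns")
transform = load_transform(script)
rows = process_bulk("name,amount\n alice ,1.50\nBOB,2\n", transform)
print(rows)
```

The token cost here is one prompt and one short script regardless of how many rows there are, which is the whole appeal on a small local GPU.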